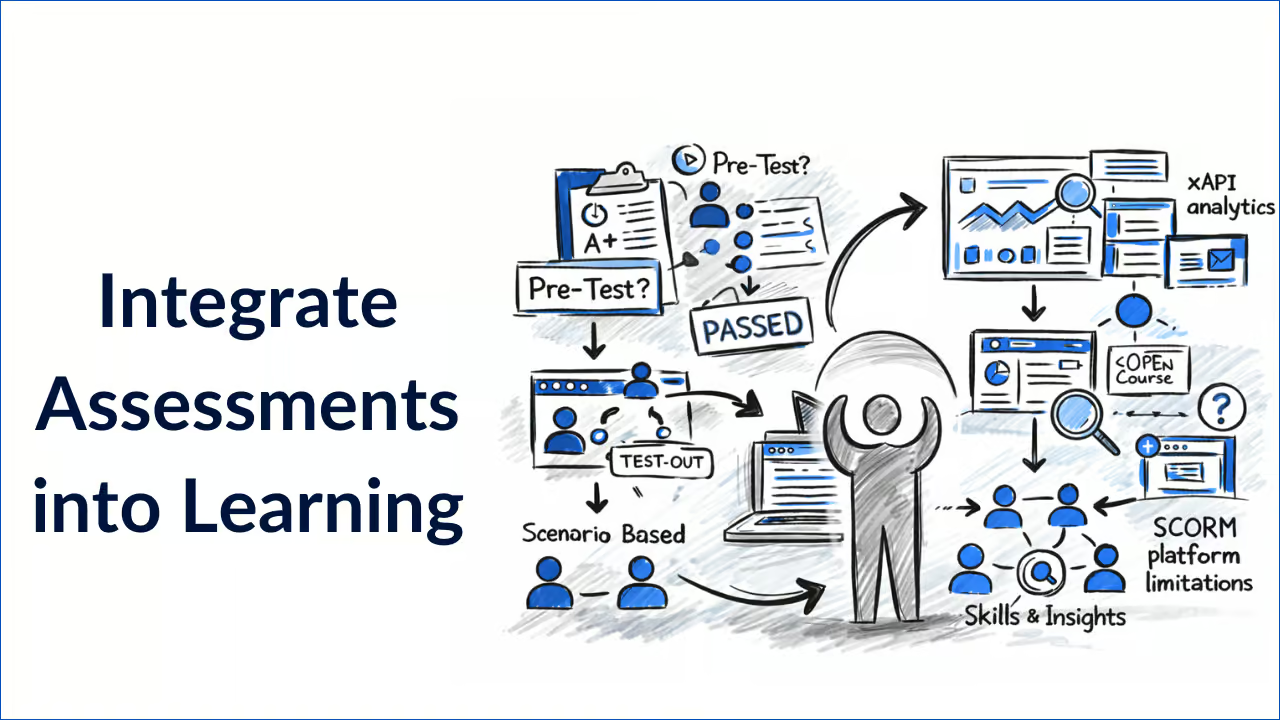

How to Optimize Your Learning with a Smarter Approach to Assessments

Assessments aren’t just a final checkpoint to prove learning happened. When designed well, they actively drive learning, save learners time, and give learning teams far richer insight into capability. From pre-tests and test-out options to scenario-based questions and deep xAPI analytics, modern assessment strategies can transform learning from a passive experience into a personalized, efficient journey.

In this article, we explore how assessments can be used to optimize learning outcomes, why flexibility and context matter, and how dominKnow | ONE supports a smarter, data-rich approach to assessment design.

How can I use assessments as a learning tool (not just proof of learning)?

Traditionally, assessments and testing have been treated as the finish line: a test at the end of a course to confirm completion. But this approach misses a huge opportunity.

Well-designed assessments:

- Reinforce learning through retrieval practice

- Identify knowledge gaps early

- Prevent unnecessary training for learners who already know the material

- Provide actionable data for L&D and the business

In short, assessments are not just about learning – they are part of learning, and ignoring this key point means you’re missing out on a fantastic learning opportunity for your audience.

How can I use pre-tests to optimize learning time?

Why pre-testing matters

Pre-tests allow learners to demonstrate existing knowledge before starting a course. For instance, if you’re introducing a new software program to the organization, you may want to know who has used the program before, and their levels of proficiency.

When used thoughtfully, pre-tests:

- Respect learner time

- Reduce frustration and disengagement

- Focus learning on genuine skill gaps

Taking the example of the new software program pre-test, you can invite employees to engage in a software simulation to prove their existing knowledge of the system. This will ensure you don’t waste time teaching experienced users the basics, and equally that inexperienced users can start from the beginning of the course. This also personalizes the learning experience for each user, rather than making assumptions.

In dominKnow | ONE, pre-tests can use the same randomized item banks as post-tests, ensuring consistent and reliable measurement. You can also set the pre-test to end automatically and move learners into the course once they can no longer achieve a passing score. Together, these options let you directly compare pre- and post-learning knowledge while minimizing unnecessary time spent in the pre-test.
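To make the early-exit rule concrete, here is a minimal Python sketch of the arithmetic behind it. It is an illustration only, not dominKnow | ONE’s implementation: the function names `can_still_pass` and `run_pretest` are hypothetical, and the sketch assumes every question is worth one point and the passing score is expressed as a number of correct answers.

```python
from __future__ import annotations


def can_still_pass(correct: int, answered: int, total: int, pass_mark: int) -> bool:
    """True if answering every remaining question correctly would reach the pass mark."""
    remaining = total - answered
    return correct + remaining >= pass_mark


def run_pretest(results: list[bool], pass_mark: int) -> tuple[str, int]:
    """Walk through answers in order; stop as soon as passing becomes impossible.

    Returns (outcome, number_of_questions_seen).
    `results` stands in for the learner's answers (True = correct).
    """
    total = len(results)
    correct = 0
    for answered, is_correct in enumerate(results, start=1):
        if is_correct:
            correct += 1
        if not can_still_pass(correct, answered, total, pass_mark):
            # Hypothetical outcome label: end the pre-test and start the course.
            return "exit_to_course", answered
    return ("passed" if correct >= pass_mark else "exit_to_course"), total
```

With a 10-question pre-test and a pass mark of 8, a learner who misses the first three questions can reach at most 7 correct answers, so `run_pretest([False] * 3 + [True] * 7, 8)` returns `("exit_to_course", 3)` and the remaining seven questions are never shown.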

Why you should explain the purpose of the pre-test

An intro screen can be used to clearly explain:

- Why the learner is being assessed upfront

- Whether the pre-test is informational or allows them to “test out” (if they complete the pre-test to a certain standard, they don’t need to complete the full training)

- What happens if they “pass” or “fail”, or how the results will be used

Setting expectations upfront improves learner trust and engagement, which in turn provides more useful results. For instance, an employee with solid prior knowledge of the topic will feel more inclined to ensure their knowledge is accurately reflected, rather than simply clicking randomly through the pre-test to get through it faster.

With dominKnow | ONE, your learning team can take this a step further and reuse these intro screens across multiple courses. If anything changes or the team has an idea on how to improve this information, they can make an update in one course, and all courses that reuse that intro screen will automatically have that update.

What are some test-out options that balance trust and control?

Not every organization is comfortable with letting learners fully test out of a course – especially in regulated or compliance-heavy environments. That’s why flexibility matters.

With a robust authoring and Learning Content Management System (LCMS) solution like dominKnow | ONE, you can:

- Enable (or disable) full test-out functionality

- Use pre-tests for informational purposes only

- Set different passing scores for pre-tests vs post-tests (for instance, if you want to require a higher passing score for pre-tests to ensure that only the most knowledgeable learners are allowed to test out from the pre-test stage)

- Automatically exit learners from a pre-test if they clearly won’t pass, so no time is wasted

This allows L&D teams to balance learner autonomy with organizational risk tolerance. If you’re in a high-consequence industry, such as finance or healthcare, you may prefer to ensure everyone completes the full training course, whereas for lower-stakes learning, testing out at the pre-test stage could be more appropriate.

Testing out of sections (not just whole courses)

Learning isn’t always all-or-nothing.

dominKnow | ONE allows assessments to be aligned to:

- Entire courses

- Individual chapters or modules

- Specific sections tied to learning objectives

Learners can test out of parts of a course while still completing sections where knowledge gaps exist. This creates a far more personalized and efficient learning experience. For instance, a learner with an intermediate understanding of a topic can test out of the beginner-level content, but will still be required to complete more advanced sections of the training to ensure comprehensive knowledge.
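The idea of section-level test-out can be sketched in a few lines of Python. This is a simplified illustration, not how dominKnow | ONE works internally: the function name `sections_to_complete`, the section labels, and the 0.8 threshold are all assumptions made for the example.

```python
from __future__ import annotations


def sections_to_complete(
    pretest_scores: dict[str, float],
    test_out_threshold: float = 0.8,  # assumed threshold, scores are 0.0 to 1.0
) -> list[str]:
    """Return the sections a learner still has to take, in course order."""
    return [
        section
        for section, score in pretest_scores.items()
        if score < test_out_threshold
    ]


# Hypothetical learner with an intermediate grasp of the topic.
learner = {"beginner": 0.9, "intermediate": 0.6, "advanced": 0.4}
print(sections_to_complete(learner))  # ['intermediate', 'advanced']
```

A stricter threshold for higher-stakes sections could be modeled by passing a different `test_out_threshold` per course or audience.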

How can I use learning objectives to drive my assessment design?

A common mistake in assessment design is forcing learning needs into limited question types (often multiple choice) and failing to connect individual pools of questions to the learning objectives covered in the course.

The better approach is to let learning needs drive the assessment design.

In dominKnow | ONE:

- Pools of questions are tied directly to learning objectives

- Randomizing both the order of learning objectives and the questions within each objective helps ensure all objectives are assessed fairly (helping to minimize primacy bias, recency bias, or learner fatigue)

- Assessment coverage remains consistent, even with shuffled questions

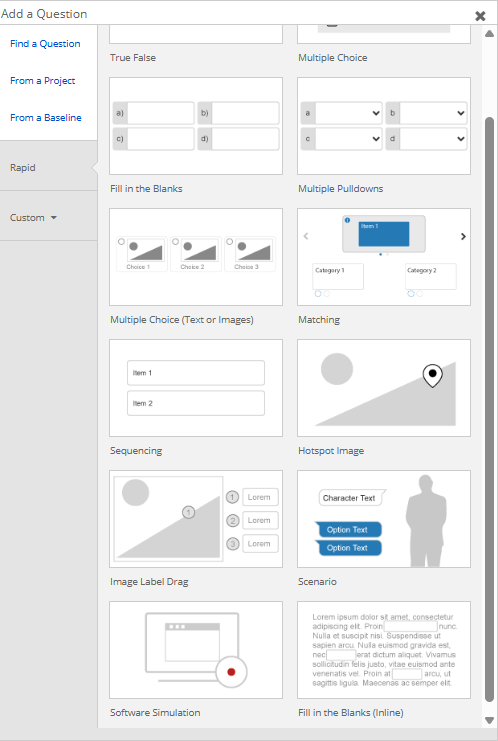

- Authors can choose from over 10 question types, each with multiple variations in how content is used for questions, answers, and feedback
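As a rough illustration of how objective-tied pools keep coverage consistent while still shuffling questions, consider the following Python sketch. It is not dominKnow | ONE’s algorithm: the function `build_assessment`, the pool structure, and the question IDs are hypothetical, and it assumes the same number of questions is drawn from every objective.

```python
from __future__ import annotations

import random


def build_assessment(
    pools: dict[str, list[str]],
    per_objective: int,
    seed: int | None = None,
) -> list[tuple[str, str]]:
    """Draw `per_objective` questions from each objective's pool.

    The order of objectives and the questions chosen within each one are
    both randomized, but every objective is always represented equally.
    Raises ValueError if a pool holds fewer than `per_objective` questions.
    """
    rng = random.Random(seed)
    objectives = list(pools)
    rng.shuffle(objectives)  # randomize the order of objectives
    form: list[tuple[str, str]] = []
    for objective in objectives:
        picked = rng.sample(pools[objective], per_objective)  # randomize within the objective
        form.extend((objective, question) for question in picked)
    return form


# Hypothetical pools keyed by learning objective.
pools = {
    "LO1": ["q1", "q2", "q3"],
    "LO2": ["q4", "q5", "q6"],
}
form = build_assessment(pools, per_objective=2)
```

Each learner may see different questions in a different order, yet every generated form contains exactly two questions per objective.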

This ensures assessments can be designed so they truly reflect what is being taught – not just what is easiest to test. It also helps alleviate learner boredom after completing endless rounds of multiple choice questions, keeping them engaged in the learning for longer.

What are some assessment question types beyond multiple choice?

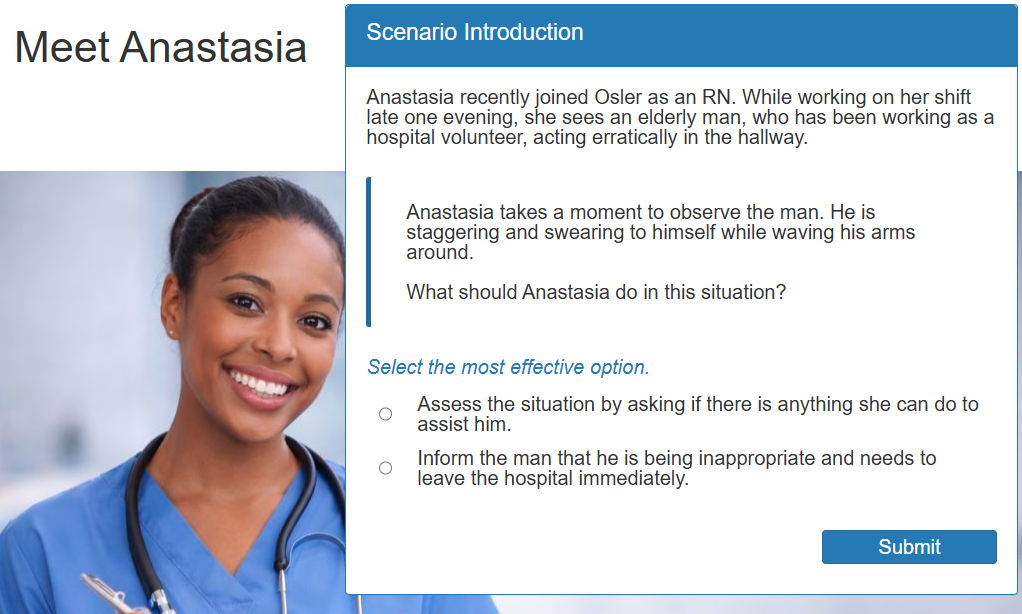

Speaking of multiple choice questions, a common trap instructional designers fall into is relying too heavily on “A, B, or C”-style quizzes. Real-world capability often can’t be measured with simple multiple choice questions, meaning we end up missing out on testing a whole range of skills and levels of understanding.

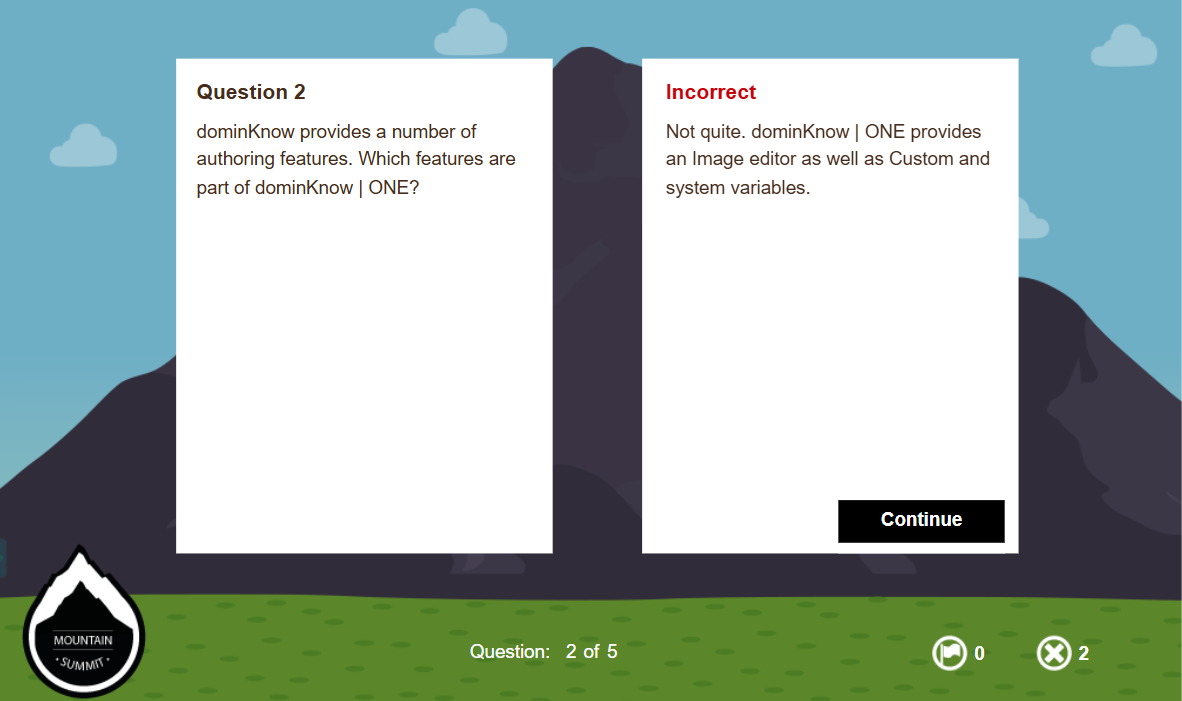

That’s why dominKnow | ONE supports a wide range of assessment types, including:

- Scenario-based questions

- Software simulations and assessments

- Practice questions designed for learning, not grading

- Multiple cumulative questions that simulate an experience and track results through variables

This flexibility makes it possible to assess decision-making, application, and performance – not just recall. It also gives the L&D team a more holistic insight into the level of understanding, enables the learning team to provide more realistic experiences, and helps remove the ability for learners to “lucky guess” their way through a course, as more rigorous assessment types put their real skills to the test.

Using Articulate Rise and struggling to create engaging assessments? dominKnow can convert your Rise content into fully editable dominKnow | ONE content. Once converted, you can take your content to the next level, no longer hampered by the limitations of Articulate Rise.

How does feedback support learning?

Feedback is where assessments become powerful learning moments.

dominKnow | ONE offers highly flexible feedback options:

- Overall test feedback

- Default feedback rules

- Individual item-level feedback (e.g. to correct wrong answers in the moment)

- Rich media feedback (not just text)

- Control over when feedback is shown (e.g. only after passing)

This allows feedback strategies to align with learning goals, whether the focus is coaching, reinforcement, or formal validation. It also turns an assessment into both a way to measure knowledge and a learning experience.

How do I access deep assessment analytics with xAPI?

Most SCORM-based learning systems track assessment data at a very high level – often limited to a single score and individual test question responses. The item-level responses can provide some valuable insight, but that is where the data ends. For teams that want a deeper understanding of the entire learning experience, this can feel like a “blunt measure” of what is often highly nuanced data. Some of these limitations are less obvious. For example, a SCORM-based platform will typically overwrite the pre-test score with the post-test score, making it almost impossible to compare the two without excessive manual effort.

dominKnow | ONE significantly improves assessment analytics (and, therefore, learning analytics) by capturing detailed xAPI data, including:

- Pre-test and post-test scores tracked separately

- Tracking of individual module (chapter) questions along with the course’s overall score

- Details on the module and learning object to which each question is linked

- Detailed data for all test and practice questions

This level of insight allows L&D teams to:

- Compare pre- and post-learning performance accurately

- Identify weak objectives or content areas

- See how well learners handle practice questions and the content they relate to, and identify areas of concern

- Make data-driven improvements to learning design

For instance, dominKnow’s xAPI data may reveal that the improvement between pre- and post-test scores is minimal, which could indicate that the course content isn’t having the intended effect or that the questions aren’t properly assessing the content. It may also reveal that everyone struggles with a specific section of the assessment, which could suggest that the related content needs to be revisited for clarity.
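A pre/post comparison of this kind could be computed along the following lines once the statements have been exported from a Learning Record Store. This Python sketch is illustrative only: the `actor`, `object`, and `result.score.scaled` properties follow the general xAPI statement structure, but the activity IDs, the helper names, and the assumption that learners are identified by `mbox` are hypothetical and do not describe the statements dominKnow | ONE actually emits.

```python
from __future__ import annotations

from statistics import mean

# Hypothetical activity IDs for the two assessments.
PRE = "https://example.com/activities/course-101/pre-test"
POST = "https://example.com/activities/course-101/post-test"


def scaled_scores(statements: list[dict], activity_id: str) -> dict[str, float]:
    """Map each learner (by mbox) to their scaled score for one activity."""
    return {
        s["actor"]["mbox"]: s["result"]["score"]["scaled"]
        for s in statements
        if s["object"]["id"] == activity_id
    }


def average_gain(statements: list[dict]) -> float:
    """Mean of (post - pre) over learners who have both scores.

    Raises statistics.StatisticsError if no learner has both.
    """
    pre = scaled_scores(statements, PRE)
    post = scaled_scores(statements, POST)
    learners = pre.keys() & post.keys()
    return mean(post[learner] - pre[learner] for learner in learners)


def statement(mbox: str, activity_id: str, scaled: float) -> dict:
    """Build a pared-down statement for the example (many xAPI fields omitted)."""
    return {
        "actor": {"mbox": mbox},
        "object": {"id": activity_id},
        "result": {"score": {"scaled": scaled}},
    }


sample = [
    statement("mailto:a@example.com", PRE, 0.5),
    statement("mailto:a@example.com", POST, 0.75),
    statement("mailto:b@example.com", PRE, 0.25),
    statement("mailto:b@example.com", POST, 0.75),
]
print(average_gain(sample))  # 0.375
```

Because the pre-test and post-test are stored as separate activities, neither score overwrites the other, which is what makes the comparison possible in the first place.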

How can I improve my assessments with reusability, branding, and single-source content design?

Effective assessment design isn’t just about creating good questions – it’s about building them in a way that saves time, reduces errors, and keeps learning consistent across your organization.

With dominKnow | ONE, you can:

- Reuse Learning Objects and their assessment questions across multiple courses

If two courses share objectives, there’s no need to recreate the same content or quiz items. Update a question once, and every instance updates automatically. This is especially valuable when building cumulative certification exams – you can reuse approved Learning Objects or question banks from existing courses to create a final assessment, ensuring you’re testing the same material without starting from scratch.

- Maintain a consistent look and feel, even when content is reused

While themes control branding and visual design, each reused asset automatically adopts the theme of the course it sits within. That means your content can be reused widely while still matching the branding and design of each specific program, giving learners a consistent experience with no need to manually update the look and feel every time.

- Use single-source design to personalize assessments for different audiences

Tag entire assessments, objectives, or individual questions to create tailored experiences from a single course build. Whether you’re serving different roles, regions, or compliance requirements, you can manage variations centrally without duplicating content thanks to single-source design.
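The tagging idea can be pictured as a simple filter over one shared question bank. The Python sketch below is a conceptual illustration under stated assumptions, not dominKnow | ONE’s data model: the `Question` class, the `questions_for` function, and the rule that untagged questions are shown to everyone are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    text: str
    # Audiences this question applies to; empty means "show to everyone" (assumed rule).
    tags: frozenset[str] = frozenset()


def questions_for(audience: str, bank: list[Question]) -> list[Question]:
    """Select the questions relevant to one audience from a single shared bank."""
    return [q for q in bank if not q.tags or audience in q.tags]


# Hypothetical bank maintained once and reused for every region.
bank = [
    Question("What is our data retention policy?"),
    Question("Which GDPR article covers consent?", frozenset({"eu"})),
    Question("Which state privacy law applies?", frozenset({"us"})),
]
eu_questions = questions_for("eu", bank)  # the shared question plus the EU-only one
```

Editing a question in the shared bank changes it for every audience that sees it, which is the practical benefit of managing variations from a single source.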

This approach keeps assessments aligned, accurate, and up to date. It reduces administrative overhead, prevents version control issues, and eliminates unnecessary rework. When a set of approved questions already does the job, reuse ensures consistency and frees up your L&D team to focus on higher-value work instead of constantly reinventing the wheel.

FAQs about assessments

Are assessments only for measuring learning outcomes?

No. Assessments also support learning by reinforcing knowledge, identifying gaps, encouraging new ways of thinking about content, and personalizing the learner journey.

Can pre-tests replace full courses?

In some cases, yes. With test-out options, learners who demonstrate competence can skip unnecessary content while still meeting organizational requirements.

How does dominKnow | ONE expand beyond standard SCORM-based assessment tracking?

SCORM typically allows only a single score per course. dominKnow uses xAPI to track pre-test scores, post-test scores, detailed item-level data, and practice questions separately.

Can assessments match our branding and learning context?

Yes. dominKnow assessments are highly customizable in both design and experience, ensuring alignment with branding, scenarios, and learner expectations.

Key takeaways

If you’re looking for ways to improve your organization’s approach to assessments, here are the key things to consider:

- Use assessments as a learning tool, not just as a way to measure knowledge acquisition

- Pre-testing can make learning more efficient by letting learners skip what they already know

- Letting your learning objectives drive assessment design leads to better learning outcomes

- Think beyond multiple-choice questions – scenarios, simulations, and gamified activities offer comprehensive testing opportunities

- Use feedback to support learning with personalized, informative responses to correct and incorrect answers in the moment

Let learning needs drive your assessment design

Assessments are too important to be treated as an afterthought.

When designed with intention, they:

- Improve learner experience

- Save time and cost

- Deliver richer insight into capability

- Actively enhance learning itself

The key is flexibility. Instead of asking “How do I turn this into a multiple-choice question?”, the better question is:

“What do learners need to be able to do – and how can assessment best support that?”

With a powerful, flexible assessment approach, assessments become not just a measure of learning, but a core driver of it.

Ready to see how better assessments can transform your learning program? Find out how easy it can be to build more creative, effective, and engaging assessments with a free trial of dominKnow | ONE.
